In my last post, I asked Claude Code to modify an existing program. This time, I had it write one from scratch.

(more…)Posts Tagged ‘llm’

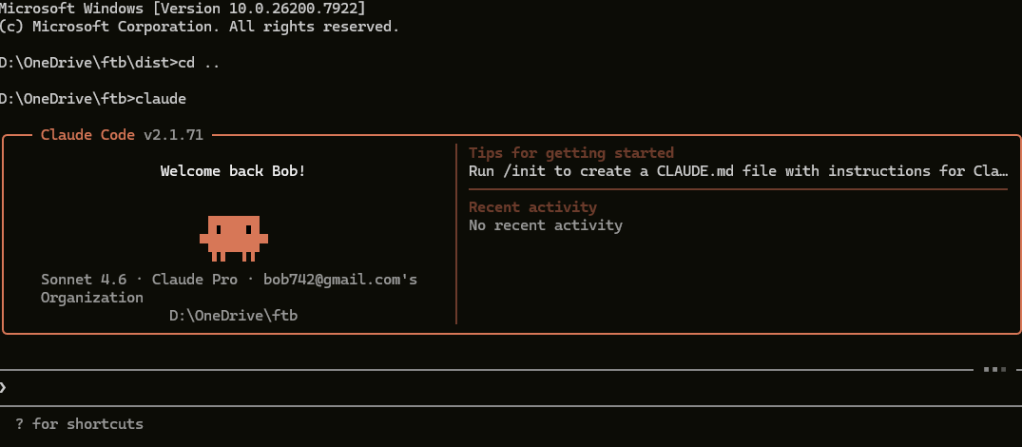

Writing Code from Scratch with Claude Code

Posted in ai, Still-coding, tagged ai, artificial-intelligence, Claude code, llm, Technology on March 15, 2026| Leave a Comment »

Claude Code, Cowork, and a Codex Correction

Posted in ai, Still-coding, tagged ai, artificial-intelligence, Claude, Claude code, Claude Cowork, Codex, llm, Technology on March 10, 2026

Since my last post, I’ve been using Claude Code more, and I’m pleased to report that it works well and is genuinely powerful. As a confirmed control freak, I was also pleased to find that I stayed in control throughout.

Antigravity or Chrome code?

Posted in ai, Still-coding, tagged ai, Antigravity, artificial-intelligence, ChatGPT, Claude, Claude code, Coding risk management, Gemini, llm, OpenAI, Technology on March 1, 2026

After spending a year building fintechbenchmark.com the traditional way—writing code, testing it, iterating—I’d grown comfortable with GitHub Copilot as my AI assistant. It delivered a significant productivity boost, though I’d learned when to trust it and when to ignore its suggestions and push forward on my own.

Then, weeks before our soft launch, our lead contractor dropped a demo of Google Antigravity (Project IDX) that made our entire workflow look like stone tools and campfire stories.

The Hidden Economics of AI: Why Bigger Isn’t Always Better

Posted in ai, tagged ai, llm, slm on February 26, 2026

The assumption that bigger AI models always deliver better results is being quietly dismantled, and the economics behind this shift are compelling. We now have Small Language Models (SLMs), a term that distinguishes them from Large Language Models (LLMs). So how do we measure the size of a model?
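One common yardstick is parameter count, and a rough estimate of the memory needed just to hold the weights is parameters × bytes per parameter. The sketch below applies that arithmetic; it assumes the weights dominate memory (ignoring activations and caches), and the 3B and 70B sizes are illustrative examples, not figures from this post.

```python
# Bytes used to store one parameter at common numeric precisions.
BYTES_PER_PARAM = {"fp32": 4, "fp16": 2, "int8": 1, "int4": 0.5}


def weight_memory_gb(num_params: float, precision: str) -> float:
    """Rough memory (GB, where 1 GB = 10**9 bytes) needed to hold a model's weights."""
    return num_params * BYTES_PER_PARAM[precision] / 1e9


if __name__ == "__main__":
    # Illustrative sizes: a 3B-parameter "small" model vs. a 70B-parameter "large" one.
    for name, params in [("3B model", 3e9), ("70B model", 70e9)]:
        print(name, {p: weight_memory_gb(params, p) for p in BYTES_PER_PARAM})
```

By this estimate, a 7B-parameter model stored at fp16 needs about 14 GB for its weights alone, which is one reason smaller models are cheaper to serve.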

Structured Outputs: The JSON-Only Revolution

Posted in Still-coding, Fintech, ai, tagged ai, artificial-intelligence, ChatGPT, Claude, json, llm, OpenAI, Technology on February 13, 2026

When I was seeding the database for fintechbenchmark.com with content generated by Claude or OpenAI models, I needed structured data that matched my database schema. My format of choice was JSON, and my approach was straightforward:

- Request JSON in the prompt

- Provide an example of the desired structure

- Parse Claude’s response: a text string containing the JSON wrapped in a Markdown code block fenced with triple backticks
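The parsing step in that approach can be sketched as follows: strip the Markdown fence, then hand what remains to a JSON parser. This is a minimal sketch assuming the reply contains at most one fenced block; `extract_json` and the sample reply are hypothetical, not the code actually used for fintechbenchmark.com.

```python
import json
import re

FENCE = "`" * 3  # built programmatically so this example's own fence stays intact

# Opening fence, optional info string (e.g. "json") up to the newline,
# then lazily capture everything until the closing fence.
FENCED_BLOCK = re.compile(FENCE + r"[^\n]*\n(.*?)" + FENCE, re.DOTALL)


def extract_json(reply: str):
    """Parse JSON from a model reply, unwrapping a Markdown code fence if present."""
    match = FENCED_BLOCK.search(reply)
    payload = match.group(1) if match else reply
    return json.loads(payload)  # raises json.JSONDecodeError on malformed output


if __name__ == "__main__":
    sample = "Here you go:\n" + FENCE + 'json\n{"name": "Acme", "founded": 2019}\n' + FENCE
    print(extract_json(sample))
```

This kind of string surgery is fragile: it breaks if the model adds a second code block or drifts from the requested structure, so each parsed record still needs validating against the schema.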

What is MCP and why is it important

Posted in ai, Database Management Systems, Still-coding, tagged ai, artificial-intelligence, Database, Fintech, llm, MCP, Technology on February 9, 2026

MCP (Model Context Protocol) solves a fundamental problem: how do you give an AI model access to external data such as databases, files, or APIs without embedding everything in each request? Introduced by Anthropic in November 2024 and now more than 15 months old, MCP is mature enough for serious production use. Think of it as a USB-C port for AI: a standardized way to connect AI models to external systems.
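Under the hood, MCP messages use JSON-RPC 2.0. As a rough illustration, a client asking a server to run one of its tools sends a `tools/call` request shaped like the one built below. This sketch only constructs the message; the tool name `search_companies` and its arguments are hypothetical, and a real client would also perform the initialization handshake and use a transport such as stdio.

```python
import json


def make_tool_call(request_id: int, tool_name: str, arguments: dict) -> str:
    """Build a JSON-RPC 2.0 request asking an MCP server to invoke one tool."""
    message = {
        "jsonrpc": "2.0",
        "id": request_id,  # lets the client match the server's response to this request
        "method": "tools/call",  # MCP method for invoking a server-side tool
        "params": {"name": tool_name, "arguments": arguments},
    }
    return json.dumps(message)


if __name__ == "__main__":
    # Hypothetical tool exposed by a database-backed MCP server.
    print(make_tool_call(1, "search_companies", {"category": "payments", "limit": 5}))
```

Because every server speaks this same message format, the model's host application can connect to a new data source without custom glue code, which is the point of the USB-C analogy.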

How We Added AI Search to Our Fintech Directory (And What We Learned)

Posted in ai, Still-coding, tagged ai, artificial-intelligence, Chatbase, Chatbot, ChatGPT, llm, Technology on January 1, 2026

When we planned fintechbenchmark.com (currently in beta), we realized that AI wasn’t optional; it was essential. We could either embrace this revolution or watch competitors leave us behind.

We faced a classic startup dilemma: build a custom AI solution (expensive and slow) or use an off-the-shelf product to launch quickly and learn what our visitors actually need. We chose the pragmatic route.
